Vietnamese Studies in the Age of Digital Humanities and AI

Digitizing Vietnam is an inter-institutional hub aimed at harnessing the power of digital humanities and AI for the advancement of all aspects of Vietnamese Studies. We seek to strengthen all fields of Vietnamese Studies through novel digital collections, innovative research hubs focused on digital and AI tools of analysis, a pedagogical archive for teaching Vietnam at all levels, and an outreach portal aimed at sharing knowledge on all aspects of Vietnam with the general public. Please join us in Digitizing Vietnam through Collections, Research, Pedagogy, and Outreach.

Collections

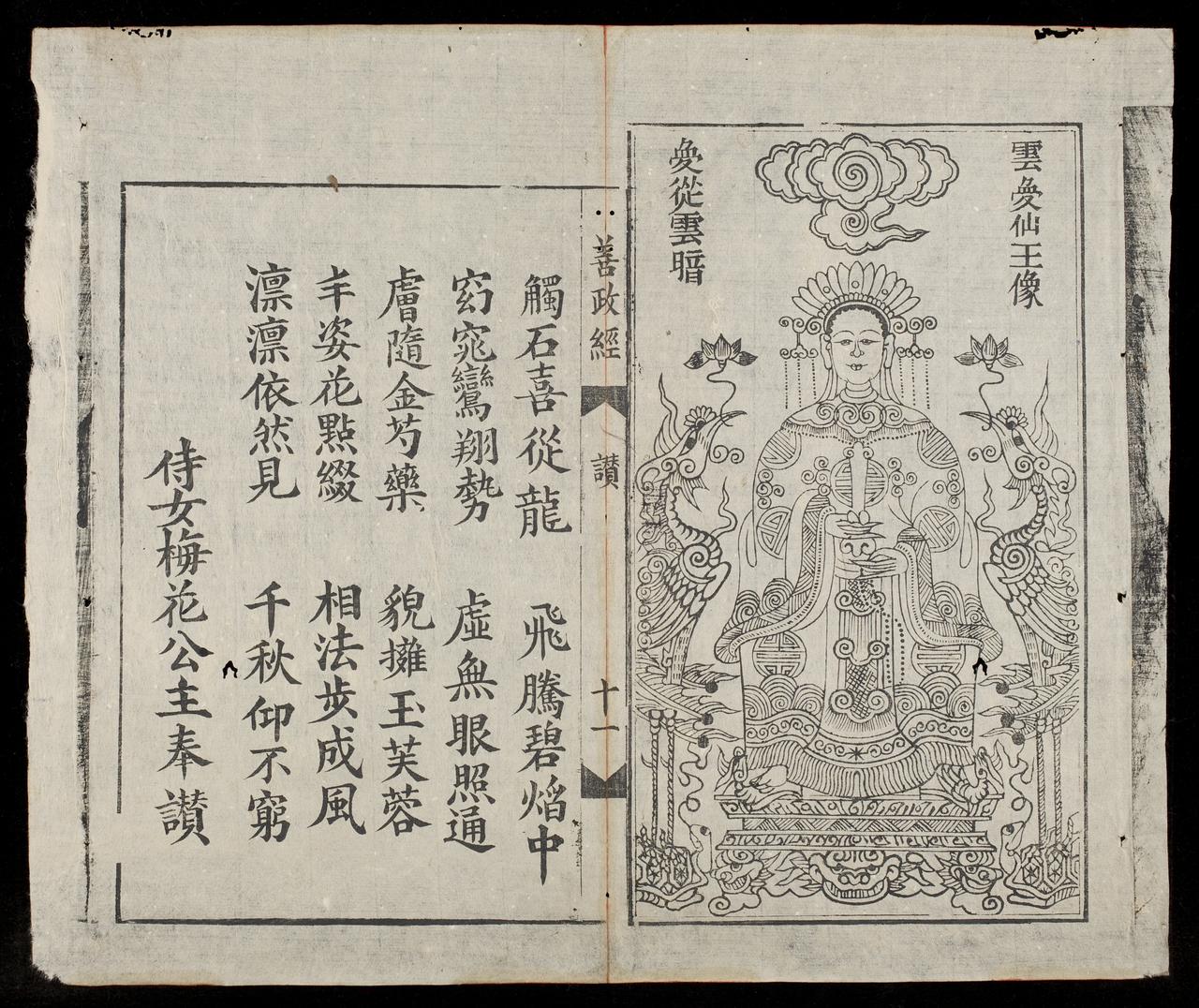

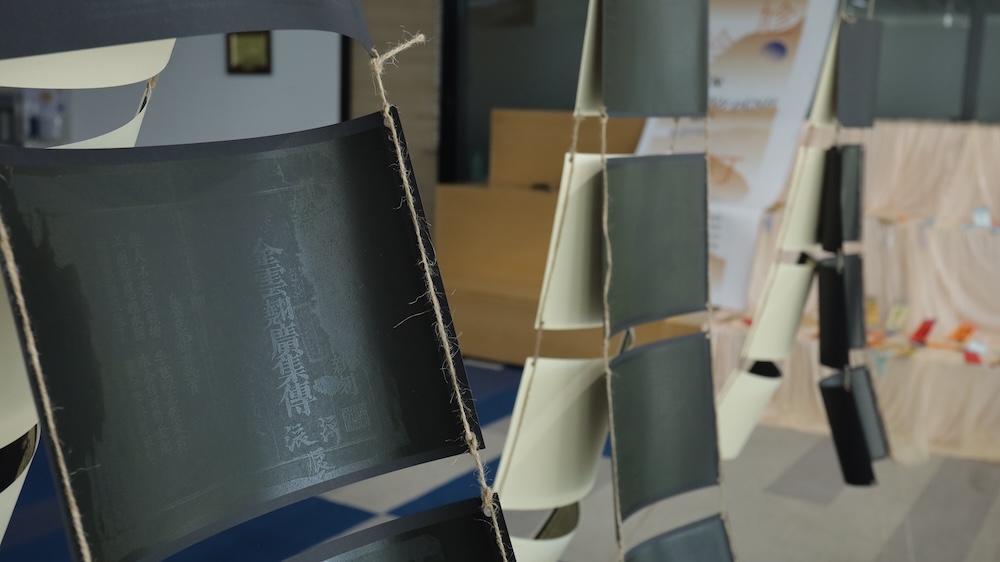

Explore a range of free-access digitized collections focused on various aspects of premodern, modern, and contemporary Vietnam.

Research

Explore, create, or contribute to innovative research hubs, each organized around a scholarly topic or theme. Hubs include curated collections, resources, and cutting-edge digital tools designed by our scholarly community for research using digital, computational, and experimental AI methods.

Pedagogy

Discover and teach Vietnamese Studies with impact. Explore curated syllabi, lesson plans, and multimedia resources designed to support innovative and inclusive learning experiences.

Outreach

Our outreach initiatives aim to connect academic research with broader communities through a range of resources, including podcasts, video lessons, curated primary materials, and featured interviews with a diverse group of scholars on Vietnam.

Digitizing Vietnam originated with the dissolution of the Vietnamese Nôm Preservation Foundation and the donation of all its archival materials to the Vietnamese Studies Program and the Department of East Asian Languages & Cultures at Columbia University in 2018. Subsequently, the Vietnamese Studies Program and the Weatherhead East Asian Institute at Columbia partnered with Fulbright University Vietnam to begin designing an inter-institutional hub dedicated to advancing digital humanities research in all fields of Vietnamese Studies. In 2023, we were awarded a Henry Luce Foundation seed grant. We staged a soft launch of materials in the spring of 2025 at Fulbright University Vietnam (Hồ Chí Minh City) and a full release at Columbia University in April 2026. Our vision is to develop digital and AI technologies for the advancement of all aspects of research on Vietnam.